
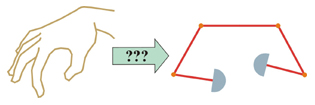

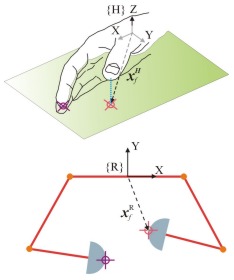

Developing a User Mapping

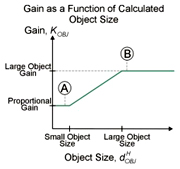

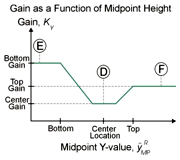

Once a user is calibrated to the CyberGlove, we customize the mapping parameters and the transformation parameters. The transformation parameters include the rotation angle and translation offsets. The object mapping parameters include gain values, gain blend sizes, and the center gain location. In all, 17 parameters are modified to give the user the best utilization of the workspace while maintaining an intuitive feel. Using a custom Graphical User Interface (GUI), the parameters can be modified in real time. A virtual robotic hand display within the GUI is initially used to set up the parameters, which avoids driving the physical robotic hand with poor mapping parameters. Once the parameters are adjusted using the virtual display, the user's motion is connected to the robotic hand and the parameters are further tuned for the best possible mapping.
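As a rough illustration of the transformation parameters (a rotation angle and translation offsets), the sketch below applies a planar rotation followed by a translation to a fingertip position. The function name, argument order, and numeric values are assumptions made for this example; the order in which the actual system applies rotation and translation is not stated here.

```python
import math

def transform_point(x, y, angle_rad, tx, ty):
    """Rotate a planar point about the origin, then translate it.

    Hypothetical helper: rotate-then-translate is an assumed convention.
    """
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x - s * y + tx, s * x + c * y + ty)

# Hypothetical values: rotate a fingertip position by 90 degrees, then shift it.
px, py = transform_point(1.0, 0.0, math.pi / 2, 2.0, 3.0)
print(round(px, 6), round(py, 6))
```

Exposing the angle and offsets as plain arguments mirrors the idea of tuning them interactively, since each can be changed and the result redrawn immediately.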

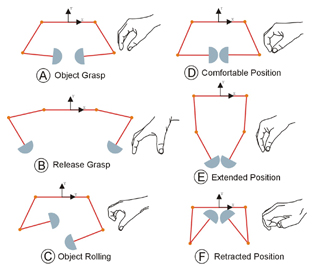

Ideally, if the mapping parameters are adjusted to match the typical positions A, B, D, E, and F, then the user should be able to roll a virtual object about his/her comfortable manipulation position, and the robot will in turn roll an object about the ideal manipulation position (C) while still being able to reach most of its workspace.

Mapping Method Results

Under the point-to-point mapping method, one can see that the index finger maps fairly well to the left robot finger workspace. However, the thumb motion is mapped to a relatively small area roughly along a vertical line. This is partially due to using the planar projection of the thumb motion. Also, the large number of points outside the robotic hand workspace leads to a distortion in the mapping, because the robot goes to the closest possible position at the edge of the workspace. It is also important to note that the natural pinch point does not match the robot's ideal manipulation point.
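The distortion caused by out-of-workspace points can be illustrated with a small sketch. It assumes, purely for illustration, an axis-aligned rectangular workspace; the real robot finger workspace has a different shape, and the function name and bounds are hypothetical. For a rectangle, clamping each coordinate gives the closest point inside the workspace.

```python
def clamp_to_workspace(x, y, x_min, x_max, y_min, y_max):
    """Return the closest point inside an axis-aligned rectangle.

    Assumption: the workspace is modeled as a rectangle only for this sketch.
    """
    cx = min(max(x, x_min), x_max)
    cy = min(max(y, y_min), y_max)
    return (cx, cy)

# Two distinct commanded positions outside the (hypothetical) workspace
# collapse onto the same edge point, which is the distortion described above.
a = clamp_to_workspace(5.0, 1.0, 0.0, 4.0, 0.0, 3.0)
b = clamp_to_workspace(9.0, 1.0, 0.0, 4.0, 0.0, 3.0)
print(a == b)
```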

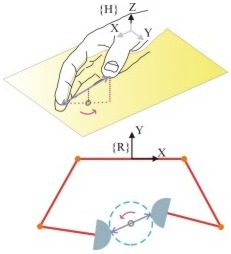

Under the object-based mapping method, the index finger motion lies almost completely within the robot's workspace. More importantly, the motion of the right robot finger, which is driven by the thumb, has been greatly expanded. Also, the pinch point matches the ideal manipulation position directly.

Method Conclusions

The object-based mapping method shows considerable improvement over the point-to-point method. However, there are some drawbacks associated with the method. The motions of the thumb and index finger are coupled, so individual finger exploration is difficult. Also, due to the large number of parameters, the degree of success varies.

Mapping Method Extensibility

While the object-based mapping concept has been demonstrated using a particular robotic hand, the mapping method is a general technique and is extensible to any planar robotic hand. By modifying the mapping parameters, particularly the nonlinear gain functions, the motions of the human hand can be mapped to a new robot workspace. One caveat that must be observed when performing the nonlinear mapping is that, in some cases, relatively small motions of the human fingers could result in large motions of the robot fingers. Thus, it may be desirable to plot the corresponding velocity ellipsoids.
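One way to check for this amplification is to linearize the mapping at a point and look at how a unit circle of human fingertip velocities is stretched. The sketch below estimates the 2x2 Jacobian by central differences and computes its singular values, which are the semi-axes of the planar velocity ellipse at that point. The cubic gain in `example_mapping` is a hypothetical stand-in, not the gain function used in this work, and all function names are assumptions.

```python
import math

def numerical_jacobian(mapping, x, y, h=1e-6):
    """Central-difference Jacobian (a, b, c, d) of a planar mapping at (x, y)."""
    fx_p, fx_m = mapping(x + h, y), mapping(x - h, y)
    fy_p, fy_m = mapping(x, y + h), mapping(x, y - h)
    a = (fx_p[0] - fx_m[0]) / (2 * h)  # d(out_x)/dx
    b = (fy_p[0] - fy_m[0]) / (2 * h)  # d(out_x)/dy
    c = (fx_p[1] - fx_m[1]) / (2 * h)  # d(out_y)/dx
    d = (fy_p[1] - fy_m[1]) / (2 * h)  # d(out_y)/dy
    return a, b, c, d

def velocity_ellipse_axes(a, b, c, d):
    """Singular values of [[a, b], [c, d]]: major and minor semi-axes."""
    s = a * a + b * b + c * c + d * d
    det = a * d - b * c
    disc = math.sqrt(max(s * s - 4.0 * det * det, 0.0))
    major = math.sqrt((s + disc) / 2.0)
    minor = math.sqrt(max((s - disc) / 2.0, 0.0))
    return major, minor

def example_mapping(x, y):
    """Hypothetical nonlinear gain: motion in x is amplified away from 0."""
    return (x + 0.5 * x ** 3, y)

major, minor = velocity_ellipse_axes(*numerical_jacobian(example_mapping, 2.0, 0.0))
print(round(major, 3), round(minor, 3))
```

A large ratio between the major and minor axes flags regions where a small human finger motion in one direction produces a disproportionately large robot motion.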


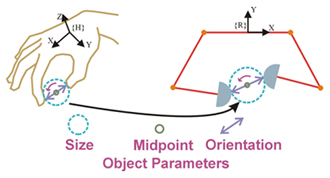

The size of the virtual object is calculated from the 3D distance between the thumb and index finger.
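A minimal sketch of that size calculation, assuming the thumb and index fingertip positions are available as (x, y, z) tuples in a common frame; the function name and sample coordinates are hypothetical, and no particular units are implied.

```python
import math

def virtual_object_size(thumb_tip, index_tip):
    """Euclidean 3D distance between the two fingertip positions."""
    return math.dist(thumb_tip, index_tip)

# Hypothetical fingertip positions in an arbitrary common frame.
print(virtual_object_size((0.0, 0.0, 0.0), (1.0, 2.0, 2.0)))
```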